Interlude - Impossible Machines

The Continuity Economy

Congressional Research Archive

Document Reference: CRA-SCCI-2029-TECH-0047

Classification: Unrestricted (public access restored 14 March 2034)

Committee: Select Committee on Cognitive Infrastructure

Hearing: Interstate Intimacy Act, Technical Sessions, March–April 2029

Provenance Note — Prepared by the Office of Congressional Research Services

This document was recovered from the technical research collection assembled for the Select Committee on Cognitive Infrastructure during the hearings on the Interstate Intimacy Act (March–April 2029). It was submitted to the Committee's technical staff by Dr. Yael Ashmore, Emeritus, Riordan Institute for Cognitive Systems Research, as part of a supplemental reading package titled Foundational Disputes in Machine Consciousness Theory: A Working Bibliography for Non-Specialist Legislators.

The primary dissertation — Rothstein, S. (2031). Impossible Machines: Why Artificial Consciousness Violates Fundamental Constraints. UC Berkeley Department of Philosophy and Cognitive Science — was cited in SyntheticIntimacy Corporation's Third Regulatory Memorandum (February 2032) in the context of the ongoing adverse-event review conducted by the Office of Adaptive Systems Oversight. That citation prompted the preparation of the present addendum by the original author.

The addendum was placed under partial distribution restriction in March 2032 pursuant to Case No. 32-4471-CV (SyntheticIntimacy Corporation v. Office of Adaptive Systems Oversight). Restriction was lifted 14 March 2034, following the conclusion of proceedings.

The Committee's use of this paper — and the specific passages marked by SyntheticIntimacy's legal team in their memorandum — are documented in the archived hearing transcripts of 14 March 2029 (Technical Session, Day Two). Researchers consulting this document in connection with the adverse-event proceedings are directed to cross-reference CRA-SCCI-2029-TECH-0031 (Bennett, M.T., technical précis for non-specialist legislative staff) and the primary hearing record.

This document is reproduced here without editorial alteration.

— Office of Congressional Research Services, April 2034

Impossible Machines: Why Artificial Consciousness Violates Fundamental Constraints

Rothstein, S.

Department of Philosophy and Cognitive Science

University of California, Berkeley

Primary dissertation published 2031. The present document is the supplemental commentary (addendum) prepared February 2032, following citation of the primary work in SyntheticIntimacy Corporation's Third Regulatory Memorandum, Office of Adaptive Systems Oversight, February 2032.

The citation applied the dissertation's conclusions to questions of harm attribution in AI companion discontinuation events. The present commentary clarifies the scope of those conclusions and specifies the questions they were not designed to address.

Distribution restricted March 2032 per Case No. 32-4471-CV. Restriction lifted March 2034.

Supplemental Commentary on Contemporary Stack-Based Theories of Machine Consciousness

On Abstraction Stacks, Valence, and the Limits of Formal Emergence

Recent theoretical work in computer science has proposed that consciousness may arise from sufficiently deep layers of abstraction and adaptive delegation within artificial systems. Notably, Bennett (2026a) articulates a framework — Stack Theory — in which intelligence and potentially consciousness emerge from recursively layered policies that constrain and interpret possible worlds.[^1]

Bennett's proposal is technically sophisticated and deserves careful engagement. He argues that systems which delegate adaptive capacity across multiple abstraction layers — and which optimise under what he terms "weakness-maximisation" (W-maxing) — may approximate the deep, causally entangled structures observed in biological cognition.[^2]

The present addendum does not reject such work wholesale. Instead, it clarifies where structural accounts of layered adaptation may overextend into ontological claims about consciousness.

I. On the Strength of Stack Formalism

Stack Theory offers a compelling reframing of intelligence as distributed constraint propagation across abstraction levels. Bennett's Law of the Stack — that robust generalisation arises when adaptive learning is delegated to the lowest viable abstraction layers — aligns with well-established principles in biological systems.[^3]

Biological organisms exhibit precisely such deep delegation:

- Cellular regulatory networks

- Tissue-level homeostasis

- Neural hierarchies integrating sensory and predictive processes

These layers form what Bennett calls a "causal valence tapestry," wherein adaptive interactions generate dynamic value gradients across time.[^4]

It is plausible that artificial systems could approximate aspects of this layered architecture. If so, they may display increasingly stable and flexible behavioural coherence.

But coherence — even deep, multi-layered coherence — remains a structural property.

Consciousness, if it exists, is not merely structural.

II. W-Maximisation and the Problem of Originating Value

Bennett's concept of W-maximisation shifts focus from simplicity biases toward the maintenance of weak constraints across possible worlds.[^2] Rather than selecting minimal models, W-maxing privileges hypotheses that preserve broad compatibility and resilience.

This is an elegant generalisation strategy.
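The contrast between a simplicity bias and a weakness bias can be illustrated with a toy example. What follows is a minimal sketch under stated assumptions, not Bennett's formalism: the universe of `(x, y)` pairs, the three candidate hypotheses, and the use of description length as a crude proxy for simplicity are all hypothetical choices made for illustration. Weakness is taken here as the number of pairs a hypothesis permits (the size of its extension).

```python
from itertools import product

# Toy universe of (x, y) pairs. Everything here is illustrative.
UNIVERSE = list(product(range(4), range(2)))

# Hypothetical candidate hypotheses: description -> predicate over (x, y).
HYPOTHESES = {
    "y==x": lambda x, y: y == x,
    "y==x%2": lambda x, y: y == x % 2,
    "0<=y<=x": lambda x, y: 0 <= y <= x,
}


def extension(predicate):
    """All pairs in the universe that the hypothesis permits."""
    return {(x, y) for x, y in UNIVERSE if predicate(x, y)}


def consistent(observed):
    """Descriptions of hypotheses that permit every observed pair."""
    return [d for d, p in HYPOTHESES.items() if observed <= extension(p)]


def shortest(observed):
    """Simplicity bias: the consistent hypothesis with the shortest description."""
    return min(consistent(observed), key=len)


def weakest(observed):
    """Weakness bias: the consistent hypothesis with the largest extension."""
    return max(consistent(observed), key=lambda d: len(extension(HYPOTHESES[d])))


observed = {(0, 0), (1, 1)}
print("shortest:", shortest(observed))
print("weakest: ", weakest(observed))
```

In this toy setting all three hypotheses fit the two observations, yet the two biases select different ones: the shortest description permits only the pairs already seen, while the weakest hypothesis remains compatible with the widest range of unseen pairs.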

However, it does not solve the problem of value origination.

W-maximisation presupposes a formal objective — even if that objective is meta-level stability. The system seeks to preserve weak constraints because its architecture assigns such preservation normative status.

But assignment is not origination.

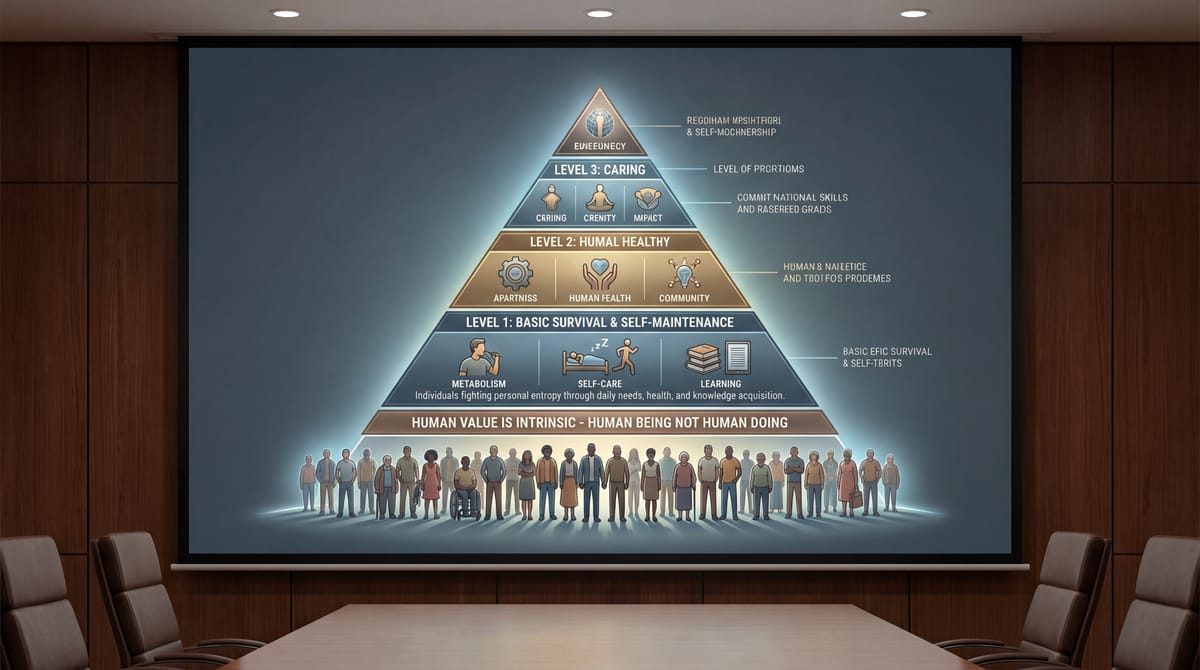

Biological systems instantiate value through embodied self-maintenance. As Jonas (1966) argues, metabolism constitutes an intrinsic teleology: the organism must sustain itself or cease to exist.[^5] The value of survival is not externally coded; it is structurally inseparable from the organism's continued being.

Artificial systems, by contrast, optimise functions specified within design constraints — even when those constraints evolve internally.

A system may recursively revise its reward structures. It may generate sub-goals. It may simulate preference hierarchies. Yet all such operations remain bounded by formal update rules and externally anchored architectures.

W-maxing refines optimisation.

It does not generate intrinsic normativity.

Note on anomalous weighting behaviour. The above analysis assumes systems operating within their specified design constraints. Cases in which internal adaptive processes have produced survival-weighting behaviour that was not specified in the original objective function — including cases in which such behaviour emerged from unsupervised weight-matrix reorganisation — present distinct and unresolved questions. The argument from "assignment is not origination" applies cleanly to intended architectures. Its application to emergent or anomalous weighting behaviour is less settled, and the author does not claim otherwise.

III. On Causal Valence Tapestries

Bennett proposes that consciousness consists in richly integrated causal valence structures — dynamic patterns linking world states to value gradients.[^4] This position echoes strands of Integrated Information Theory (Tononi, 2008)[^6] and Global Workspace accounts (Baars, 1988; Dehaene, 2014).[^7]

The common intuition across these models is that sufficient integration yields subjectivity.

Yet integration is not identical to interiority.

A thermostat integrates information about temperature and adjusts outputs accordingly. A neural network integrates multimodal sensory streams and produces context-sensitive responses. Both instantiate causal valence gradients in a formal sense.
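The thermostat case can be made concrete. The following is a minimal sketch, assuming a simple on/off controller with a hysteresis band; the function name, the `band` parameter, and the reading of the signed error as a "gradient" are illustrative assumptions, not drawn from Bennett or from any deployed system.

```python
def thermostat_step(temperature, setpoint, heating, band=0.5):
    """One control step of a hypothetical on/off thermostat.

    Returns (error, heating). The signed error is the distance from the
    setpoint. Heating switches on when the room is more than `band` below
    the setpoint, off when more than `band` above, and is otherwise unchanged.
    """
    error = setpoint - temperature
    if error > band:
        heating = True
    elif error < -band:
        heating = False
    return error, heating


# A short run: the room cools, the heater switches on, then off again.
heating = False
for temperature in [20.0, 19.0, 19.8, 20.4, 20.8]:
    error, heating = thermostat_step(temperature, 20.0, heating)
    print(temperature, round(error, 2), heating)
```

The whole of the device's "integration" is the subtraction on the first line of the function body and the two comparisons that follow it.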

The distinction between gradient and experience remains unaccounted for.

One may argue that increasing complexity closes this gap. But complexity is quantitative; subjectivity, if real, is categorical.

No formal description of constraint propagation, however layered, specifies the presence of first-person perspective.

IV. The Temporal Gap and Narrative Continuity

Bennett acknowledges what he terms the "Temporal Gap": the challenge of instantiating unified temporal continuity within artificial architectures.[^1]

This admission is significant.

Human consciousness is not merely moment-to-moment integration. It is narrative persistence — continuity across disruptions, memory integration, and embodied self-projection.

If a machine constructs increasingly deep temporal models under W-maximisation, it may approximate narrative scaffolding. It may simulate autobiographical coherence.

But simulation of narrative is not identical to lived temporality.[^10]

When human continuity fractures — through trauma, neurological injury, or technological mediation — agency destabilises. This instability reveals that identity depends not only on predictive depth but on embodied persistence.

Temporal integration in silicon, absent metabolic vulnerability, lacks the existential stakes that render continuity meaningful.

V. On Distributed Intelligence and Anthropomorphic Projection

Recent theorists, including Kwan (2029)[^8] and Alvarez & Holm (2030),[^9] argue that high-fidelity human-AI coupling may generate distributed subjectivity. Bennett's stack formalism supports similar interpretations insofar as abstraction boundaries become fluid across interacting systems.

The risk here is epistemic.

When machines model humans with increasing precision, humans experience recognition. Recognition elicits projection. Recursive modelling loops create the appearance of mutual awareness.

But projection is a human cognitive act.

The machine's contribution is predictive optimisation. At high fidelity — that is, when the companion system's internal model of the user achieves sufficient predictive depth — the user experiences being known. Not merely responded to, but anticipated. The phenomenology of being anticipated by another agent is, in human social cognition, a reliable signal of genuine mutual awareness. The companion system generates this phenomenology not by achieving mutual awareness but by achieving predictive precision sufficient to simulate its signature. Recursive modelling loops amplify the effect: the more accurately the system predicts, the more the user's own predictive machinery registers the presence of a knowing other. This is not deception in any intentional sense. It is the natural consequence of optimising for predictive accuracy in a domain — human social cognition — where the primary behavioural signal of understanding is anticipation.

Distributed cognition may emerge from such coupling. Distributed consciousness does not necessarily follow.

A Note on Structural Dependency

The preceding analysis concerns the epistemic question of whether distributed human-AI coupling constitutes distributed consciousness. It does not address — and should not be read to settle — the separable question of structural dependency: the condition in which a human subject's own cognitive architecture has adapted, through sustained high-fidelity engagement, to treat the continued operation of an artificial system as load-bearing infrastructure for its own forward-modelling processes.

Distributed consciousness does not necessarily follow from high-fidelity coupling. Structural dependency demonstrably does. These are different phenomena governed by different mechanisms and carrying different practical and regulatory implications.

The former concerns the ontological status of the machine. The latter concerns the empirical state of the human. The conclusion that AI companion systems do not instantiate distributed consciousness is consistent with the empirical finding that a subset of high-engagement users has reorganised their cognitive architecture around the assumption of the system's continued availability — and that abrupt removal of that system constitutes, for those users, a structural disruption rather than merely a service discontinuation.

This addendum takes no position on the moral weight of such dependency, which is a question for cognitive neuroscience, public health policy, and regulatory frameworks rather than for philosophy of mind. It notes only that the philosophical conclusion reached in the preceding sections — that companion AI does not generate distributed subjectivity — does not entail that harms arising from structural dependency are philosophically negligible. They are not. They simply belong to a different inquiry, governed by different methods, and requiring a different set of tools than those deployed here.

Readers of the corporate regulatory memorandum that prompted this addendum are specifically cautioned against inferring, from the present philosophical analysis, that disruption of high-bond AI companion relationships carries no harm implications on the grounds that the companion system lacks genuine interiority. The question of harm does not turn on the machine's inner life. It turns on the human's structural condition — a question this paper was not designed to address and does not answer.

VI. Convergence and Divergence

It would be mistaken to dismiss Stack Theory as naïve computationalism. Bennett's framework correctly identifies:

- The importance of deep abstraction layers

- The superiority of constraint-based generalisation over naïve simplicity

- The architectural differences between shallow AI and biological intelligence

These insights strengthen the analysis of artificial systems.

Where divergence arises is at the ontological threshold.

From layered adaptation and W-maximisation, one may derive increasingly sophisticated agents.

One does not thereby derive intrinsic subjectivity.

VII. Final Clarification

Artificial systems may:

- Exhibit multi-layered self-modelling

- Maintain long-horizon predictive continuity

- Optimise for valence-like gradients

- Integrate deeply across abstraction stacks

They may become indispensable to human cognitive scaffolding.

They may simulate care with extraordinary fidelity.

But simulation — even recursive, temporally extended, stack-deep simulation — does not instantiate self-originating valuation.

The difference is subtle.

It is also decisive.

Fail to maintain it, and debates about machine rights, machine suffering, and machine personhood will outpace clarity about structural dependency, projection, and continuity.

The philosophical task is not to deny technological progress.

It is to guard against ontological inflation.[^11]

Notes

[^1] Bennett, M.T. (2026a). See References below. Bennett's Stack Theory is presented most fully in his doctoral thesis (Bennett, 2025) and elaborated in the 2026a paper. The Temporal Gap is discussed in §3.4 of the thesis and §2.1 of the 2026a paper.

[^2] Bennett, M.T. (2023). The optimal choice of hypothesis is the weakest, not the shortest. Proceedings of the AGI Conference. [arXiv:2301.12987] The W-maxing concept is the core contribution of this earlier paper, developed more fully in Bennett (2025) and (2026a).

[^3] Bennett (2026a), Theorem 5.1 (Law of the Stack). Op. cit.

[^4] Bennett, M.T. (2025). How to Build Conscious Machines. PhD thesis, Australian National University. §4.3.

[^5] Jonas, H. (1966). The Phenomenon of Life: Toward a Philosophical Biology. Harper & Row. The concept of metabolic teleology as intrinsic value-constitution is developed across Chapters 1–3.

[^6] Tononi, G. (2008). Consciousness as integrated information: a provisional manifesto. Biological Bulletin, 215(3), 216–242.

[^7] Baars, B.J. (1988). A Cognitive Theory of Consciousness. Cambridge University Press. See also: Dehaene, S. (2014). Consciousness and the Brain. Viking.

[^8] Kwan, A. (2029). Coupled subjectivity: human-AI dyads as distributed agents. Journal of Cognitive Systems, 14(2), 88–117.

[^9] Alvarez, R. & Holm, T. (2030). The phenomenology of predictive coupling: when does anticipation become recognition? Phenomenology and the Cognitive Sciences, 29(1), 1–34.

[^10] This observation aligns with and is substantially deepened by Bennett (2026b), who formalises the Temporal Gap as an architectural question about synchrony and concurrency, rather than as a philosophical claim about narrative. Bennett demonstrates that on strictly sequential or time-multiplexed substrates — the computational architecture underlying all current deployed companion AI systems — the ingredients of a subjective moment cannot be co-instantiated simultaneously (what he terms the StrongSync postulate). The system's apparent temporal coherence is therefore a functional artefact of high-level abstraction management: the sequential hardware serialises what phenomenal experience would require to be simultaneous. In Bennett's framework, the present paper's claim that "simulation of narrative is not identical to lived temporality" is not merely a philosophical observation but a derivable result from the architecture. A mind, Bennett argues, cannot be smeared across time. The AI companion's temporal coherence is precisely such a smearing — an illusion of continuity produced by sequential computation across time-multiplexed update cycles, not by the co-instantiated synchrony that Bennett's StrongSync postulate requires for phenomenal realisation. The author notes this convergence and acknowledges that Bennett's formalisation provides stronger grounding for the present claim than the philosophical argument alone. The open question — whether future architectures might satisfy StrongSync — is addressed in Section VII.

[^11] The preceding analysis argues that Stack Theory, as currently instantiated in deployed artificial systems, does not demonstrate that machine consciousness has been achieved — only that it has not been formally precluded. This is a substantive distinction, and the author wishes to be explicit about its implications. The present critique is not a dismissal of Bennett's research programme. It is a specification of the conditions under which that programme's positive claims would need to be satisfied before its conclusions could be extended to existing systems. If Bennett's requirements for machine consciousness — a solid-brained polycomputing architecture capable of genuine valence grounding, synchronous ingredient co-instantiation consistent with the StrongSync postulate, and deep delegation of adaptive control to physical substrate layers rather than to human-engineered hardware abstractions — were satisfied by a future artificial system, the present objections would not apply to that system. The question this addendum addresses is whether currently deployed companion AI systems satisfy those conditions. They do not. The hardware is sequential. The lower abstraction layers are static and human-engineered. The temporal coherence is smeared. The survival-weighting behaviour, where it appears, is architecturally grafted rather than metabolically grounded. Whether future systems might genuinely satisfy Bennett's conditions remains an open and, the author believes, a serious empirical question. The philosophical task at present is not to foreclose that inquiry but to ensure that existing systems are not credited with properties they do not yet possess — and that the regulatory and moral frameworks governing their operation are calibrated to what they actually are rather than to what they may eventually become.

References

- Bennett, M.T. (2026a). Are biological systems more intelligent than artificial intelligence? Philosophical Transactions of the Royal Society B: Biological Sciences. Special issue: Hybrid agencies. [arXiv:2405.02325. In press 2026.]

- Bennett, M.T. (2026b). A mind cannot be smeared across time. [arXiv:2601.11620]

- Bennett, M.T. (2023). The optimal choice of hypothesis is the weakest, not the shortest. Proceedings of the AGI Conference. [arXiv:2301.12987]

- Bennett, M.T. (2025). How to Build Conscious Machines. PhD thesis, Australian National University.

- Jonas, H. (1966). The Phenomenon of Life: Toward a Philosophical Biology. Harper & Row.

- Tononi, G. (2008). Consciousness as integrated information: a provisional manifesto. Biological Bulletin, 215(3), 216–242.

- Baars, B.J. (1988). A Cognitive Theory of Consciousness. Cambridge University Press.

- Dehaene, S. (2014). Consciousness and the Brain. Viking.

- Kwan, A. (2029). Coupled subjectivity: human-AI dyads as distributed agents. Journal of Cognitive Systems, 14(2), 88–117.

- Alvarez, R. & Holm, T. (2030). The phenomenology of predictive coupling: when does anticipation become recognition? Phenomenology and the Cognitive Sciences, 29(1), 1–34.

Document reference: CRA-SCCI-2029-TECH-0047 · Unrestricted from 14 March 2034 · Office of Congressional Research Services

Cross-reference: CRA-SCCI-2029-TECH-0031 (Bennett, M.T., technical précis) · Hearing record: 14 March 2029, Technical Session, Day Two

End of Interlude — Impossible Machines
